MasterControl AI Trust Centre

Welcome to MasterControl's AI Trust Centre, your resource for understanding how we responsibly develop, implement, and govern our artificial intelligence solutions with transparency and ethical standards at the forefront. Our commitment to building trustworthy AI technologies is demonstrated through rigorous testing, continuous oversight, and adherence to industry-leading privacy practices. Our goal is to ensure that our innovations enhance your business while protecting what matters most.

Our Core AI Principles

MasterControl is committed to responsible AI specialised for the life sciences industries, ensuring that your use of AI aligns with global regulatory requirements, guidelines, and standards.

Compliant

At MasterControl, we implement comprehensive security controls throughout our AI development lifecycle. Advanced threat modeling, continuous monitoring, and rigorous access controls safeguard our AI infrastructure against emerging vulnerabilities, so our clients can trust the security foundation on which our intelligent solutions are built.

Secure

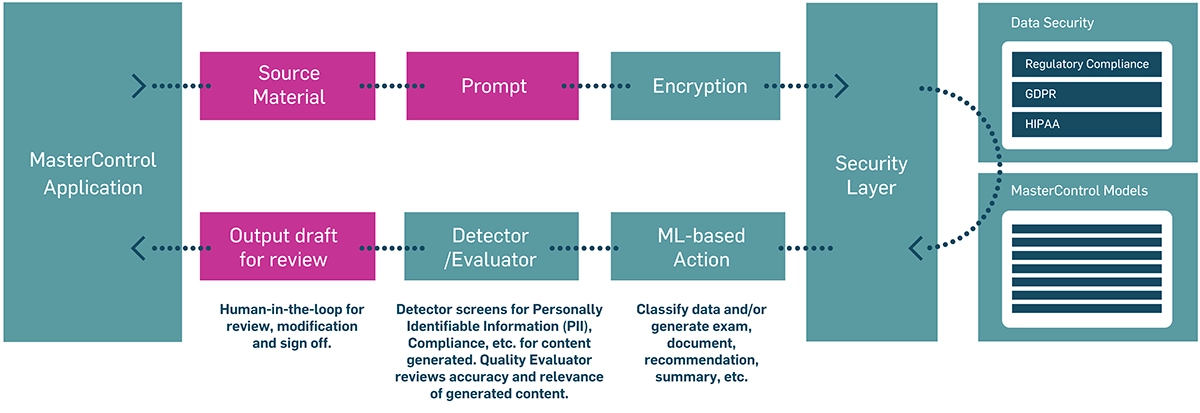

Data privacy and security are critical to you, especially in highly regulated industries. That’s why, as an expert in life sciences, MasterControl has built its secure agentic AI platform. It includes a system of customised large language models (LLMs), services, and programmatic agents, all strictly governed and administered by MasterControl on our compliant platform. MasterControl does not use third-party services to provide AI functionality, so your data never leaves our secure and validated system for processing.

Trustworthy

MasterControl is committed to responsible, ethical AI development and deployment. Every intelligent solution we develop undergoes rigorous evaluation against industry standards and our own stringent principles, demonstrating that when you partner with MasterControl, you're choosing a company that values patient safety, risk management, transparency, accountability, and continuous improvement.

Compliant

MasterControl owns its full AI technology stack, from agents to models to processing. Our AI platform adheres to regulatory requirements including 21 CFR Part 11, EU MDR, GDPR, and HIPAA, where applicable. In addition, MasterControl is compliant with ISO 9001, ISO 27001, ISO 27017, ISO 27701, and ISO 42001.

Secure

MasterControl's commitment to AI security and data privacy encompasses robust protection mechanisms that safeguard both our intelligent systems and customers' sensitive information. We do not use third-party large language models (LLMs) to provide AI functionality. We implement comprehensive technical guardrails, enforce strict data governance protocols, and maintain transparent privacy practices that not only comply with global regulations but also enable our customers to leverage AI capabilities with complete confidence in how their critical data is protected, used, and managed.

Trustworthy

MasterControl is committed to responsible, ethical AI development that assists humans. Our overall strategy places responsibility for the use of AI outputs on the user, with an emphasis on a human-in-the-loop approach. Users can compare the input information with the output within the application to verify its validity. MasterControl’s AI features do not perform any decision-making tasks. For more information, see MasterControl's AI Responsible Use Principles.

Frequently Asked Questions (FAQs)

Does MasterControl comply with AI regulatory guidance for vendors?

MasterControl owns its full AI technology stack, from agents to models to processing. Our AI platform adheres to regulatory requirements including 21 CFR Part 11, EU MDR, GDPR, and HIPAA, where applicable. In addition, MasterControl is compliant with the following ISO standards:

• ISO 9001 – Quality Management

• ISO 27001 – Information Security

• ISO 27017 – Cloud Security

• ISO 27701 – Privacy Information Management

• ISO 42001 – Artificial Intelligence Management Systems (AIMS)

Is your solution 21 CFR Part 11 compliant for AI-generated outputs?

Yes. MasterControl is specifically designed to comply with all requirements of Part 11.

How does MasterControl ensure that its AI capabilities are developed and deployed ethically?

MasterControl is committed to protecting customer data. Customer inputs remain private, are not shared with others, and are never used to train our production models. All data is securely processed within our environment, and customers retain full control over their data.

Do you have formal AI governance and risk management processes?

Yes. MasterControl has an established and certified AI Management System (AIMS) in compliance with the ISO 42001 standard.

Is usage of MasterControl Generative AI optional?

MasterControl AI capabilities are designed to be user-invoked and do not run automatically in the background. Some AI features are available as standalone tools with controlled access, while others are embedded within product workflows.

For embedded features, AI is only activated through explicit user actions (such as clicking a button or initiating a search) and operates within existing user permissions. AI capabilities assist users in generating insights or content, but do not independently take actions or make decisions within validated workflows.

Users remain in control of whether and how AI-generated outputs are used, and all actions taken within the system remain subject to standard user accountability and auditability.

What internal roles or committees oversee AI risk, compliance, and ethical use?

Oversight is performed by MasterControl’s Artificial Intelligence Management Committee (AIMC), which evaluates new AI capabilities and material changes to existing ones before release.

AIMC is a cross-functional operational governance unit that includes representatives from engineering, product, security, privacy, compliance, quality, legal, and regulated-domain subject matter experts. This governance structure is responsible for defining acceptable AI use cases, reviewing risk, overseeing validation expectations, approving release controls, and monitoring ongoing production behavior.

Is customer data used to train MasterControl LLMs?

No. However, if that were to change in the future, any products or features that would directly use customer data to train a shared generative model would be optional and any such training would only be done with a customer’s consent.

How do you ensure data segregation between customers?

MasterControl deploys individual resources to each customer instance, including a lean local deployment of the AI model. This deployment is linked to each customer’s individual S3 bucket and EC2 instance provisioned by the IaaS provider.

Is AI input/output data stored, logged, or retained beyond the immediate user session?

AI input and output handling follows defined retention and logging policies. Operational logs may capture limited metadata required for reliability, security monitoring, abuse detection, debugging, and auditability, such as timestamps, request identifiers, feature identifiers, model version, error state, and system telemetry. Unless explicitly configured and disclosed otherwise, customer business content submitted to AI features is not retained longer than necessary to provide the service and support defined operational purposes. Any retention of prompts or outputs for troubleshooting or auditing is subject to documented retention periods, restricted access, and security controls.
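The metadata-only logging described above can be illustrated with a minimal Python sketch. It is a hypothetical example, not MasterControl's implementation: the allow-list `ALLOWED_FIELDS` and the helpers `new_event` and `to_log_line` are assumptions, with field names taken from the metadata categories listed above. Any key outside the allow-list, such as prompt or output text, is dropped before the record is serialised.

```python
import json
import uuid
from datetime import datetime, timezone

# Hypothetical allow-list drawn from the metadata categories named above;
# anything not listed (e.g. prompt or output text) is never written to the log.
ALLOWED_FIELDS = ("timestamp", "request_id", "feature_id", "model_version", "error_state")


def new_event(feature_id: str, model_version: str, **extra) -> dict:
    """Create an event dict with a UTC timestamp and a random request identifier."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request_id": str(uuid.uuid4()),
        "feature_id": feature_id,
        "model_version": model_version,
        **extra,
    }


def to_log_line(event: dict) -> str:
    """Serialise only allow-listed metadata keys as JSON; all other keys are dropped."""
    record = {key: event[key] for key in ALLOWED_FIELDS if key in event}
    return json.dumps(record, sort_keys=True)
```

An allow-list is used here rather than a block-list so that new, unanticipated fields are excluded from the log by default.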

Are AI prompts or outputs accessible to MasterControl personnel for troubleshooting, monitoring, or model improvement?

MasterControl collects feedback to fine-tune, adapt, and reinforce the model via supervised fine-tuning (SFT). MasterControl’s Artificial Intelligence Management Committee (AIMC) coordinates with the Security Operations Group (SOG) for incident handling associated with AI systems. However, customer data is not accessible to MasterControl personnel for model improvement or general review. Any exceptional access for incident investigation or support is controlled, documented, and performed under applicable confidentiality and security obligations.

How do you ensure response accuracy and relevance?

Our generative AI solutions are built on architectures such as Retrieval-Augmented Generation (RAG) and Knowledge Graphs (KG). These address the fundamental challenge of accuracy by grounding responses in retrieved reference material, so that the system does not guess. We apply quality thresholds before delivering outputs. Our approach meticulously retrieves, verifies, and presents information from reference points, and generated information is subject to human-in-the-loop review and approval.
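The idea of applying a quality threshold before delivering an output can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not MasterControl's RAG or knowledge-graph pipeline: token-overlap (Jaccard) similarity stands in for embedding-based retrieval, no language model is called, and the `threshold` value of 0.2 is arbitrary. When no reference passage clears the threshold, the function abstains instead of guessing; otherwise it returns the passage with its source identifier as a draft flagged for human review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Passage:
    source_id: str
    text: str


@dataclass
class DraftAnswer:
    text: str
    source_id: str
    score: float
    needs_human_review: bool = True  # a draft until a user reviews and approves it


def _tokens(text: str) -> set[str]:
    """Lower-cased word set with surrounding punctuation stripped."""
    return {t.strip(".,;:?!").lower() for t in text.split() if t.strip(".,;:?!")}


def relevance(query: str, passage: Passage) -> float:
    """Jaccard overlap of query and passage tokens (a stand-in for embedding similarity)."""
    q, p = _tokens(query), _tokens(passage.text)
    union = q | p
    return len(q & p) / len(union) if union else 0.0


def answer(query: str, corpus: list[Passage], threshold: float = 0.2) -> Optional[DraftAnswer]:
    """Return a grounded draft, or None (abstain) if no passage clears the threshold."""
    if not corpus:
        return None
    best = max(corpus, key=lambda p: relevance(query, p))
    score = relevance(query, best)
    if score < threshold:
        return None  # abstain rather than guess
    # A real system would pass best.text to a model as context; here it is returned verbatim.
    return DraftAnswer(text=best.text, source_id=best.source_id, score=score)
```

For example, with a corpus containing a hypothetical passage `Passage("SOP-001", "Deviations must be reported within 24 hours.")`, the query `"When must deviations be reported?"` returns a draft citing `SOP-001`, while a query sharing no terms with any passage returns `None`.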

How are AI features reviewed, approved, and re-validated for use in regulated (GxP) workflows?

Reviews cover intended use, data sources, model selection, validation results, known limitations, privacy and security implications, human oversight requirements, and customer impact. All MasterControl AI features assist users in generating insights or content and are designed with a human-in-the-loop approach, but do not independently take actions or make decisions within validated workflows.

How are changes to AI behavior communicated to customers?

Change management for AI follows formal software lifecycle and release management procedures. Model changes, prompt/template changes, retrieval pipeline changes, policy logic changes, and infrastructure changes are reviewed under documented change control. Changes are assessed for risk, tested in non-production environments, validated against predefined acceptance criteria, and approved before deployment. Material changes that could alter functionality, output characteristics, risk posture, or customer obligations are tracked, versioned, and communicated through standard customer communication channels as appropriate. AI functionality is positioned with clear intended use and operational boundaries. Customers remain responsible for applying their own validation, procedural controls, and quality-system review where required by their regulatory context. MasterControl supports this by documenting the feature, its limitations, and the controls used to manage risk and change.

How are AI models assessed for bias and how is bias mitigated over time?

Bias assessment is incorporated into model evaluation and operational monitoring as part of the broader AI risk management process. The specific bias evaluation approach depends on the AI use case, the data types involved, and the impact of the output. For enterprise regulated workflows, bias analysis focuses less on protected-class consumer profiling and more on systematic error patterns that could create unfair, inconsistent, or misleading outcomes across user groups, content categories, geographies, document types, language variants, or regulated interpretations.

Before release, models and AI workflows are evaluated using representative test sets and scenario-based validation. Testing considers whether the system behaves consistently across relevant categories such as language, terminology variation, geography-specific phrasing, document formats, process context, and user-role context. The evaluation also looks for undesirable tendencies such as overconfident unsupported responses, inconsistent handling of similar inputs, skew toward dominant training or retrieval patterns, and variability in regulated-language interpretation.

How are MasterControl AI models developed, tested, validated, and updated?

AI models and AI-enabled features are developed under a controlled software and machine learning lifecycle. Development begins with a defined intended use, input and output boundaries, risk assessment, security review, and acceptance criteria. The chosen model architecture, retrieval design, orchestration logic, and output controls are documented. For higher-risk or regulated use cases, the design also specifies what source evidence is used, what user review is expected, and what the system is not permitted to do.

How often are MasterControl models refreshed or retrained?

There is no specific cadence for refreshing or retraining a model. We monitor accuracy, hallucinations, performance, and other metrics, and remain active in the model development community (e.g., we have contributed several models on Hugging Face). We evaluate various models, including different quantized variants, to assess whether an application might be better served by a different model or variation.

How are AI errors detected, escalated, remediated, and communicated to customers?

MasterControl deploys guardrails designed by its AI/ML Agile development team, utilizing the following key metrics:

• Truthfulness – for preservation of factual correctness

• Relevance – to keep the application free of hallucinations

• Duplication and repetitiveness

• PII detection and alerting

• Removal of entries in generated responses not mentioned in the context

If any of the metrics above drops below an established threshold, the issue is triaged to determine the source of the problem, and a ticket with associated documentation is created in our engineering systems of record.
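The threshold check described above can be sketched in Python as follows. The metric names follow the list above, but the threshold values, the `TriageTicket` type, and the simple sentence-level `non_repetition` score are hypothetical placeholders rather than MasterControl's actual guardrails. Truthfulness and relevance scores are assumed to be computed elsewhere, PII detection is represented as a pass/fail score, and the removal of unsupported entries is not modelled.

```python
from dataclasses import dataclass

# Hypothetical thresholds: names mirror the guardrail list, values are illustrative only.
THRESHOLDS = {
    "truthfulness": 0.90,
    "relevance": 0.85,
    "non_repetition": 0.80,
    "pii_free": 1.0,  # 1.0 = no PII detected; any detection fails this check
}


@dataclass
class TriageTicket:
    metric: str
    score: float
    threshold: float


def non_repetition(text: str) -> float:
    """Share of sentences that are unique (1.0 means no duplicated sentences)."""
    sentences = [s.strip().lower() for s in text.split(".") if s.strip()]
    return len(set(sentences)) / len(sentences) if sentences else 1.0


def check_guardrails(scores: dict[str, float]) -> list[TriageTicket]:
    """Open one triage ticket for each metric that falls below its threshold."""
    tickets = []
    for metric, threshold in THRESHOLDS.items():
        score = scores.get(metric, 0.0)  # a missing score is treated as a failure
        if score < threshold:
            tickets.append(TriageTicket(metric, score, threshold))
    return tickets
```

A real pipeline would record each ticket in an engineering system of record; in this sketch the tickets are simply returned to the caller.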

The AIMC coordinates with the Security Operations Group (SOG) and Privacy Team for handling and communication of incidents associated with AI systems. Interested parties (including external parties) may report adverse AI impacts to [email protected] or [email protected], and the AIMC will facilitate investigation. The AIMC and SOG also determine any applicable legal reporting obligations.

What is MasterControl's incident response process if AI produces materially incorrect or misleading outputs?

If materially incorrect or misleading outputs occur, the issue is triaged and severity-classified; affected functionality may be restricted or disabled; root cause analysis is performed; and fixes are deployed via controlled change management (prompt, retrieval, or model rollback).

Our Artificial Intelligence Management Committee (AIMC) coordinates with the Security Operations Group (SOG) and Privacy Team for handling and communication of incidents associated with AI systems. Interested parties (including external parties) may report adverse AI impacts to [email protected] or [email protected], and the AIMC will facilitate investigation.

Do MasterControl customers have their own MasterControl LLM?

Currently, we do not offer customer-specific LLMs. While our models are centralized, customer data and outputs are never shared across customers.

Trust by Design: Secure and Responsible AI for Regulated Industries

Learn How MasterControl’s ISO 42001 Certified Framework Ensures Transparency, Accountability, and Confidence in Every AI Decision.
