GxP Lifeline

Despite a Focus on Risk Management, Why Do Unthinkable Things Continue to Happen?

In August 2018, the seemingly impossible occurred in Genoa, Italy. A bridge that had stood for a relatively short 50 years collapsed, killing 43 people. The reason was a lack of maintenance.

This came on the back of the collapse of a pedestrian bridge at Florida International University in March 2018, just prior to its opening. That collapse, which killed six people, was due to a design flaw.

I could actually spend the entire article listing incidents and disasters where, in the aftermath, people asked, ‘How could this happen?’ Deepwater Horizon; the Grenfell fire in the U.K.; the Dreamworld ride tragedy in Australia; the head-on collision of two trains in Germany – the list is long and continues to grow. And so does my frustration.

The reason for this frustration? We have more risk management now than we have ever had before. We now have an international standard that is supposed to provide a more cohesive approach to the management of risk. But even with all of this focus, the unimaginable continues to occur.

There are several factors that I believe contribute to this:

- The definition of risk is not helpful;

- Risk ownership is at the wrong levels of the organization; and

- The manner in which likelihood is determined is inappropriate.

Unless there is a fundamental shift in these basics, risk management is going to be considered to have failed when these tragedies occur, which, in turn, threatens the very credibility of the risk management profession and those that work within it.

Risk Definition

It may seem obvious, but fundamental to the effective management of risk is an understanding of what a risk is.

From the outset, let me be frank: I consider the definition of risk in ISO 31000 (“the effect of uncertainty on objectives”) to be confusing and utterly ineffectual as a definition. Effect is defined as “a change which is a result or consequence of an action or other cause.” In essence, an effect is an outcome or consequence, so if we substitute that into the definition, it becomes “the consequence of uncertainty on objectives.”

This definition, in my view, does not capture the essence of what the risk is: the event we are trying to avoid. To me, there needs to be a real focus on the actual thing we are trying to stop: in other words, seeing a risk as a potential event, as shown in the definition I have developed:

“A possible event or incident that, if it occurs, will have an impact on the organization’s objectives.”

This definition focusses on the event, recognizing that there is a possibility it can occur (likelihood) and, if it does occur, it will have an impact (consequence) on the organization’s objectives.

Risk Ownership

Currently, in all the organizations I have worked with, risk ownership is assigned to the lower levels in the belief that that is where the incidents occur, so that is where the risks should be owned.

On the surface, that seems appropriate as it is likely that if something does happen, it will occur in a specific functional area, so appointing functional managers as owners seems logical. This, however, is not the case for two primary reasons:

- The controls associated with the risk they have been given ownership of are likely to be owned by managers at higher levels of the organization; and

- It is unlikely, therefore, that these functional managers have the necessary level of authority to ascertain whether the controls associated with the risk are effective, which means that it is not possible for them to assess the likelihood of the risk.

My biggest issue with driving risk ownership to the lower levels of the organization is that the controls for the risk are, for the most part, owned at the corporate level. This means that those given ownership of the risk do not own any of the controls that reduce its likelihood and/or consequence. More importantly, they have no visibility of the effectiveness of those controls and no authority to enquire as to their effectiveness.

Likelihood Is Not Based on Time, Frequency or Probability

The inadequacy of the risk definition and the pushing of ownership to the lower levels of organizations are things that I believe need to change. That said, I believe the most important change to the management of risk needs to be in how we assess likelihood.

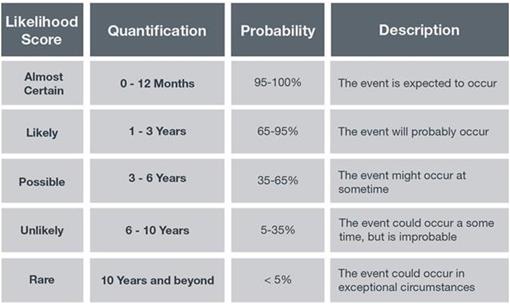

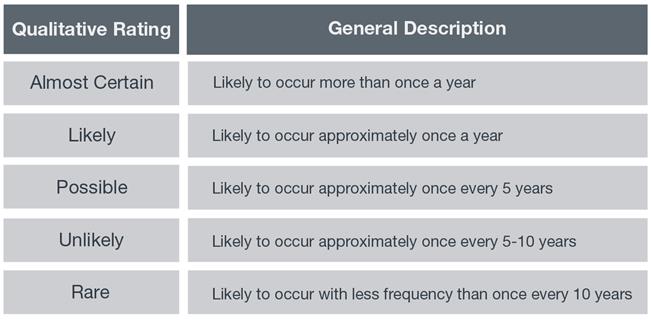

Assessing the level of likelihood for risk is something I have been questioning for some considerable time. I have followed the conventional wisdom up until this point and used the traditional criteria to express likelihood. You may have criteria similar to the examples shown below:

These descriptors, whilst standard across the industry, have not sat well with me for some time, but I was unsure why. That was until recently when it hit me like a proverbial ton of bricks. You can’t assess the likelihood of events using time, frequency or probability as the measure, when those three variables have no influence on whether a risk materializes or not. Yes, you heard correctly; you cannot determine how likely it is that an event will occur using time, frequency or probability as the measure.

To illustrate, let’s look at a few risks that I have developed for organizations:

- Contaminated food consumed by restaurant patrons.

- A worker or member of the public exposed to unbonded asbestos.

- A wrong medication administered to a patient.

- An explosion at a fuel storage depot.

- A Legionnaires’ disease outbreak in a hospital.

- An unauthorized release of or alteration to client confidential information.

The likelihood of these risks occurring is in no way going to be based on time, frequency, or probability. The likelihood of these risks is going to be based on one thing and one thing only: the effectiveness of the current control environment.

So, let’s take one of these risks, contaminated food consumed by restaurant patrons, as a case study. We have two restaurants, neither of which has had a reported incident of contaminated food being served in the last seven years. Using the criteria below, we assess the likelihood and determine that the likelihood in both restaurants is unlikely.

But is that the case?

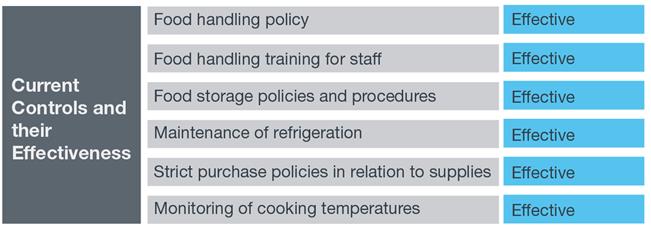

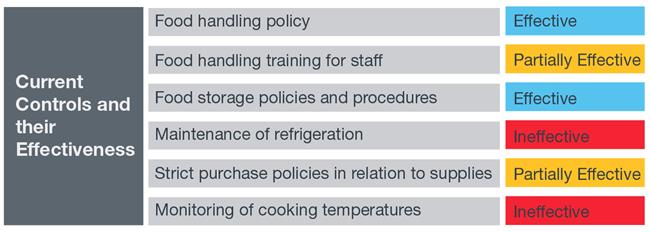

We conduct an audit on each of the restaurants, with the following results:

Restaurant 1

Restaurant 2

Despite the obvious difference in the effectiveness of the control environment, using time and frequency to determine likelihood would still see them assessed at the same level.

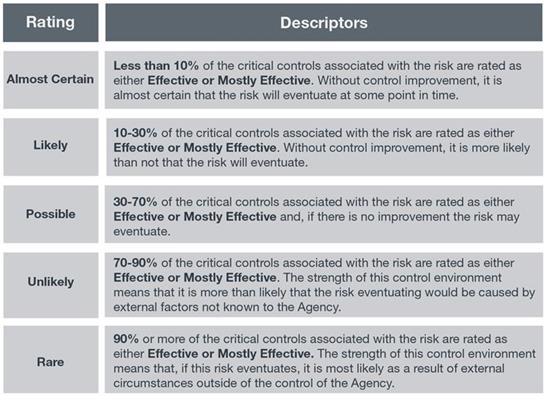

So, what if we were to use a likelihood matrix such as this instead?

If we then assess the likelihood based on the observed effectiveness of the controls, the likelihood of contaminated food being served to restaurant patrons would be significantly higher at restaurant 2 than at restaurant 1.

If we continue to try and estimate likelihood based on frequency for risks where the likelihood is actually dependent on the effectiveness of the controls, the decisions we make are likely to be flawed.

The key to gaining an accurate assessment of likelihood, therefore, is to understand the controls and their effectiveness.
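The contrast between the two approaches can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the control names, effectiveness scores, rating thresholds, and the choice to rate on the weakest control are all hypothetical. They are not the audit results or the likelihood matrix from the figures in this article.

```python
from dataclasses import dataclass

# Everything below is hypothetical and for illustration only:
# control names, scores, thresholds, and the "weakest control" rule.


@dataclass
class Control:
    name: str
    effectiveness: float  # assumed audit score: 0.0 (ineffective) to 1.0 (fully effective)


def likelihood_by_frequency(years_since_last_incident: float) -> str:
    """Conventional approach: rate likelihood from incident history alone."""
    if years_since_last_incident >= 10:
        return "Rare"
    if years_since_last_incident >= 5:
        return "Unlikely"
    if years_since_last_incident >= 1:
        return "Possible"
    return "Likely"


def likelihood_by_controls(controls: list[Control]) -> str:
    """Alternative approach: rate likelihood from the weakest audited control."""
    weakest = min(c.effectiveness for c in controls)
    if weakest >= 0.9:
        return "Rare"
    if weakest >= 0.7:
        return "Unlikely"
    if weakest >= 0.5:
        return "Possible"
    return "Likely"


# Two restaurants with the same clean seven-year history
# but very different (invented) audit findings.
restaurant_1 = [
    Control("Cold storage temperature checks", 0.95),
    Control("Staff hygiene training", 0.90),
    Control("Supplier verification", 0.90),
]
restaurant_2 = [
    Control("Cold storage temperature checks", 0.40),
    Control("Staff hygiene training", 0.60),
    Control("Supplier verification", 0.80),
]

for label, controls in [("Restaurant 1", restaurant_1), ("Restaurant 2", restaurant_2)]:
    print(label, likelihood_by_frequency(7), likelihood_by_controls(controls))
```

With these invented inputs, the frequency-based rating cannot tell the two restaurants apart, while the control-based rating separates them. Rating on the weakest control is one possible design choice; an organization could equally weight controls by their importance.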

Let’s go back to the very first example in this article – the collapse of the bridge in Genoa. If we were to assess the likelihood of the bridge collapsing on the basis of frequency, we would assess it as rare given that, up until that point, it had never collapsed.

As reported in the New York Times on Sept. 6, 2018: “The southern supports that initially went slack are the same in which a structural engineering professor at the Politecnico di Milano, Carmelo Gentile, found troubling signals of corrosion or other possible damage in tests performed last October.

“He warned the company that manages the bridge, Autostrade per l’Italia, or Highways for Italy, but he said that it never followed up on his recommendation to perform a fuller computer study and to outfit the bridge with permanent sensors.

“Probably they underestimated the importance of the information,” Professor Gentile said in an interview.

“Barring the emergence of further evidence,” said Vijay K. Saraf, principal engineer at Exponent, an infrastructure and construction consulting firm in Menlo Park, Calif., “everything you have is consistent with the failure of the south stays.”

Had an assessment been done on the basis of control effectiveness and not time, frequency or probability, there might have been a very different view of likelihood and the tragedy may have been averted.

In summary, risk management needs to change. In particular, we need to move from “doing risk management” to actually managing risk.
